Rambus announces an HBM4E Memory Controller IP with features that help designers meet the demanding memory bandwidth requirements of next-generation AI accelerators and graphics processing units (GPUs).

The HBM4E Controller supports operation at up to 16Gbps per pin, providing a throughput of 4.1TB/s to each memory device. For an AI accelerator with eight attached HBM4E devices, the aggregate exceeds 32TB/s of memory bandwidth for AI workloads.
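
The per-device and aggregate figures above can be reproduced with simple arithmetic. The sketch below is illustrative only: it assumes a 2048-bit data interface per HBM4E device, which the announcement does not state, and uses decimal units (1TB/s = 1000GB/s). The function name `device_bandwidth_tbps` is hypothetical.

```python
def device_bandwidth_tbps(pin_rate_gbps: float, interface_width_bits: int = 2048) -> float:
    """Peak bandwidth of one memory device in TB/s (decimal units).

    interface_width_bits defaults to 2048, an assumption about the
    HBM4E data interface width that is not taken from the announcement.
    """
    gigabits_per_second = pin_rate_gbps * interface_width_bits
    gigabytes_per_second = gigabits_per_second / 8  # 8 bits per byte
    return gigabytes_per_second / 1000              # GB/s -> TB/s


per_device = device_bandwidth_tbps(16)  # 16Gbps per pin
aggregate = per_device * 8              # eight attached devices

print(f"{per_device:.3f} TB/s per device, {aggregate:.3f} TB/s for 8 devices")
```

Under that assumption, the result is 4.096TB/s per device (rounded to 4.1TB/s in the text) and 32.768TB/s across eight devices, consistent with the "exceeds 32TB/s" claim. These are peak theoretical numbers; sustained bandwidth depends on access patterns and controller efficiency.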

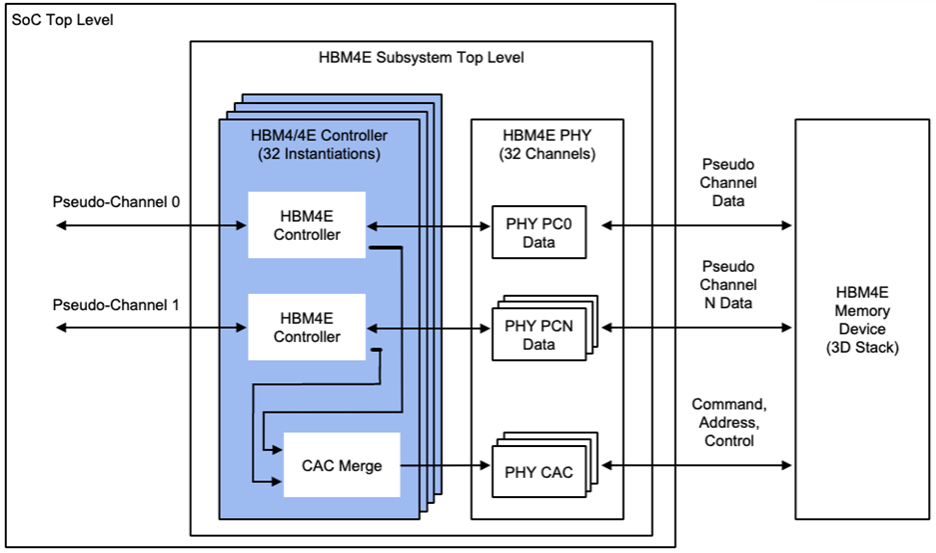

The HBM4E Controller IP can be paired with third-party standard or TSV PHY solutions to develop a complete HBM4E memory subsystem in a 2.5D or 3D package, as part of an AI SoC or a custom base die solution.

The Rambus HBM4E Controller is available for licensing, and early access design customers can engage today. Find out more at https://www.rambus.com/interface-ip/hbm/.