The integration of artificial intelligence (AI) and machine learning (ML) into embedded systems is transforming industries by enabling smarter, more adaptive devices. However, embedding AI/ML into safety-critical systems presents challenges that go beyond the high stakes of failure and stringent compliance requirements.

Embedded systems often operate in harsh conditions, such as extreme temperatures, constant vibration or even underwater. They must also work within strict energy, memory and computational limits, with no room for a bulky processor or a cooling fan. Many embedded systems also run on batteries that need to last for years. Yet AI models, especially large ones, consume a lot of power.

All these constraints make running AI on embedded systems challenging. To make models fit, developers use strategies such as pruning (removing less important neural connections) and quantisation (compressing numerical data into low-bit formats) to reduce model size and computational overhead whilst preserving model performance.

Safety-critical systems add requirements for determinism, certifiability and resilience. Systems such as automotive braking or flight controls require deterministic behaviour: consistent, predictable outputs for given inputs within guaranteed time frames. However, AI/ML models (especially neural networks) are inherently probabilistic and can produce non-deterministic outputs.

Safety-critical systems must also comply with stringent certification standards (e.g., ISO 26262 for automotive, IEC 62304 for medical devices). This is the certifiability challenge. Regulators demand evidence that outputs derive from verifiable logic, not uninterpretable statistical patterns. Yet AI/ML models are largely “black boxes” with opaque decision-making processes, which makes it difficult to trace decisions and prove system robustness.

Resilience matters because embedded systems often operate in uncontrolled environments (e.g., industrial robots, drones) and can face adversarial attacks: malicious inputs designed to trick ML models (e.g., perturbed sensor data causing misclassification).

To address these demands, engineers use several strategies to balance performance with reliability. Determinism is enforced by freezing trained models (locking weights to prevent runtime drift) and using static memory allocation to eliminate timing variability, ensuring predictable, real-time responses. Certifiability is tackled in two ways. Hybrid architectures pair neural networks with rule-based guardrails, and formal verification methods mathematically bound model behaviour. Together these support compliance with standards such as ISO 26262 or FDA guidelines.

For resilience against adversarial conditions, models are hardened via adversarial training (exposing them to perturbed data during development) and input sanitisation techniques (e.g., noise filtering), while runtime monitors track anomalies like sudden confidence drops to flag potential attacks. Secure update protocols (e.g., cryptographically signed OTA patches) and redundancy (e.g., voting systems across multiple models) further mitigate risks, creating layered defenses that align AI/ML flexibility with embedded systems’ rigid safety and security requirements.
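The voting idea can be sketched in a few lines. This is a minimal Python illustration, assuming three redundant models that each output a class label. The function name `majority_vote`, the labels and the 2-out-of-3 quorum are illustrative choices and do not come from any particular product.

```python
from collections import Counter
from typing import Optional, Sequence


def majority_vote(predictions: Sequence[str], quorum: int = 2) -> Optional[str]:
    """Return the label reported by at least `quorum` redundant models.

    Intended for a 2-out-of-3 arrangement. Returns None when no label
    reaches the quorum, which the caller should treat as a fault and
    handle by switching to a predefined safe state.
    """
    if not predictions:
        return None
    label, count = Counter(predictions).most_common(1)[0]
    return label if count >= quorum else None


# Three independently trained (hypothetical) models classify the same frame.
print(majority_vote(["pedestrian", "pedestrian", "cyclist"]))  # pedestrian
print(majority_vote(["pedestrian", "cyclist", "vehicle"]))     # None
```

Returning `None` instead of guessing keeps the disagreement visible to the surrounding safety logic. Voting only adds protection to the extent that the models do not share the same failure modes.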

Advancements in AI/ML for embedded systems

Model compression techniques (pruning, quantisation, etc.) shrink AI/ML models with little or no loss of accuracy. Coupled with specialised hardware such as neuromorphic chips and ultra-efficient AI accelerators, these innovations are transforming embedded systems.

Pruning and quantisation are two of the most widely adopted and effective methods for compressing neural networks. Pruning strips away redundant or non-critical components from a network. For example, a model trained to recognise objects might initially have 10,000 connections between its artificial neurons. Engineers can analyse which connections contribute least to accurate predictions (e.g., those with near-zero weights) and remove 40% of them. The result might be a model that needs roughly 60% of the original computational resources while retaining 95% of its original accuracy, provided the runtime or hardware can exploit the resulting sparsity.
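The sketch below shows magnitude-based pruning, the "near-zero weights" criterion described above. It works on a flat, hypothetical list of ten weights rather than a real network, and the function name `prune_by_magnitude` is illustrative. Production toolchains typically prune layer by layer, often gradually, and fine-tune the model afterwards to recover accuracy.

```python
from typing import List


def prune_by_magnitude(weights: List[float], fraction: float) -> List[float]:
    """Zero out the `fraction` of weights with the smallest absolute values."""
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be between 0 and 1")
    n_prune = round(len(weights) * fraction)
    # Indices sorted from least to most important, using |w| as the importance score.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = set(order[:n_prune])
    return [0.0 if i in pruned else w for i, w in enumerate(weights)]


# Ten hypothetical connection weights; remove the 40% closest to zero.
weights = [0.8, -0.01, 0.3, 0.002, -0.6, 0.05, 0.9, -0.03, 0.4, 0.0001]
pruned_weights = prune_by_magnitude(weights, 0.4)
print(pruned_weights)  # [0.8, 0.0, 0.3, 0.0, -0.6, 0.05, 0.9, 0.0, 0.4, 0.0]
print(sum(w != 0.0 for w in pruned_weights), "of", len(weights), "connections kept")  # 6 of 10 connections kept
```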

Quantisation addresses a fundamental challenge in embedded AI: the inefficiency of high-precision calculations. Neural networks typically represent weights and activations as 32-bit floating-point numbers, a format better suited to a supercomputer than a smartwatch. Quantisation shrinks the numeric format the model uses. For instance, converting 32-bit values to 8-bit integers reduces memory usage by 75% (one byte per value instead of four). It also accelerates computation, since 8-bit integer operations are cheaper than 32-bit floating-point ones and four times as many values fit into each memory transfer. This precision trade-off is carefully calibrated, like compressing a high-resolution photo into a smaller file while retaining enough detail to recognise faces.
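The 32-bit to 8-bit conversion can be illustrated with symmetric per-tensor quantisation. This is one common scheme among several; real frameworks often use per-channel scales, zero points and calibration on representative data. The weight values and function names below are hypothetical, and the standard `array` module stands in for real tensor storage so that the 4-to-1 byte ratio is visible.

```python
from array import array
from typing import List, Tuple


def quantise_int8(values: List[float]) -> Tuple[array, float]:
    """Symmetric per-tensor quantisation: value is approximately q * scale, q in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round each value to the nearest step and clamp to the signed 8-bit range.
    q = array("b", (max(-127, min(127, round(v / scale))) for v in values))
    return q, scale


def dequantise(q: array, scale: float) -> List[float]:
    """Map 8-bit codes back to approximate real values."""
    return [x * scale for x in q]


weights = array("f", [0.81, -0.42, 0.07, -1.27, 0.33])  # 32-bit floats (hypothetical values)
q, scale = quantise_int8(list(weights))

print(weights.itemsize * len(weights), "bytes as float32")  # 20 bytes as float32
print(q.itemsize * len(q), "bytes as int8")                 # 5 bytes as int8

# Rounding to the nearest step bounds the error by half a step (scale / 2).
max_error = max(abs(a - b) for a, b in zip(weights, dequantise(q, scale)))
print(f"max error {max_error:.4f} (step size {scale:.4f})")
```

The printed error stays within half a quantisation step. This is the "carefully calibrated" trade-off in miniature: a coarser step saves nothing further in storage here but increases the error.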

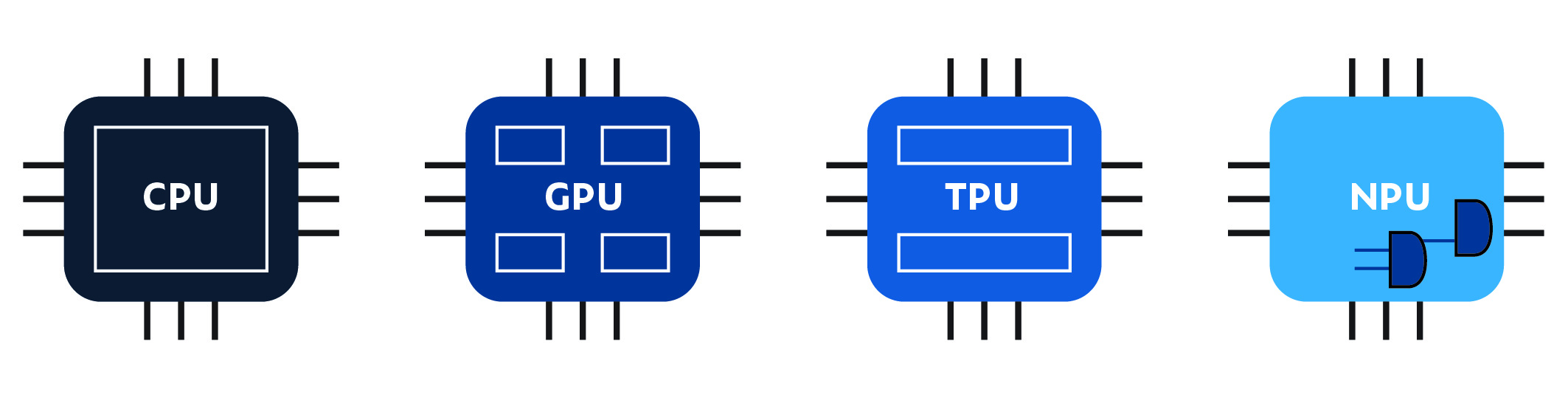

In addition to model compression, running AI on embedded systems is helped by hardware advances such as graphics processing units (GPUs), tensor processing units (TPUs) and neural processing units (NPUs). General-purpose CPUs, while versatile, struggle to meet the intense computational demands of modern AI models on energy-constrained devices. This gap has spurred a wave of domain-specific architectures: chips designed not for broad computing tasks but to accelerate neural networks with much greater efficiency and lower power consumption. Unlike CPUs, NPUs excel at parallelised matrix multiplications, executing thousands of multiply-accumulate operations simultaneously at low power.

Addressing safety and compliance

Using AI/ML in real-time, safety-critical embedded systems (e.g., autonomous vehicles, medical devices, industrial robots) is challenging due to these models’ inherent non-determinism. Their behaviour can change with different inputs or configurations, raising the potential for unpredictability. A common approach to mitigate this risk is to convert the model to a static and unmodifiable model after training. This is a critical step for ensuring determinism in safety-critical systems and is commonly known as “freezing the model”.

Take, for example, an automotive manufacturer designing an AI system for something truly high-stakes, such as a self-driving car. Lives depend on it behaving exactly the same way every single time, with no surprises. But AI models, especially neural networks, can behave inconsistently. They may run a bit slower one day because the hardware got warm, or round a number differently on a GPU than on a CPU. This is a safety engineer’s nightmare.
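One concrete source of the GPU-versus-CPU rounding difference is that floating-point addition is not associative. Parallel hardware routinely changes the order in which it sums values. The snippet below does not model any specific chip. It only shows, in plain Python, that regrouping the same three additions changes the result, whereas integer arithmetic does not.

```python
values = [0.1, 0.2, 0.3]

# Left-to-right accumulation, as a simple sequential loop would compute it.
left_to_right = (values[0] + values[1]) + values[2]
# The same sum grouped differently, as a parallel reduction might split the work.
regrouped = values[0] + (values[1] + values[2])

print(left_to_right)               # 0.6000000000000001
print(regrouped)                   # 0.6
print(left_to_right == regrouped)  # False

# Integer addition is associative, so the grouping cannot change the result.
ints = [1, 2, 3]
print((ints[0] + ints[1]) + ints[2] == ints[0] + (ints[1] + ints[2]))  # True
```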

Freezing the model, in effect, “locks” every connection, weight and math operation, preventing further tweaks or updates. Tesla’s Autopilot is a good example. Its lane-detection AI uses frozen neural networks, demonstrating to regulators (ISO 26262, etc.) that the system behaves exactly as tested, in every car, under all conditions. If Tesla didn’t freeze the model, a software update or hardware quirk might subtly change how the car “sees” the road, which would invalidate its certification. Freezing allows Tesla to say: “We’ve certified this version, and it won’t drift over time”.

However, freezing alone isn’t enough to pass certification. Imagine freezing a model that uses 32-bit floating-point numbers. Tiny differences in how chips handle floating-point arithmetic could still cause inconsistencies. For example, a medical robot might calculate a 0.1-millimetre incision as 0.1000001 one day and 0.0999999 the next. For certification, that’s unacceptable: auditors want to know exactly what’s running on the device. A frozen model becomes a static file, say, a .tflite file (TensorFlow Lite model) or an .onnx file (Open Neural Network Exchange model). Engineers can hand that file to auditors and say: “Here’s the AI, line by line, byte by byte. Test it once, and it’ll behave the same way in all 10 million cars”. Tesla’s frozen models, for instance, are baked into its firmware updates, which undergo rigorous ASIL-D (the strictest automotive safety level) validation.
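A device can confirm that it is running exactly the certified file by comparing a cryptographic digest of the model against the value recorded at certification time. The sketch below uses Python's standard `hashlib` module. The file name `lane_detector.tflite`, its placeholder contents and the helper names are hypothetical. A hash only detects changes, so in practice it would complement, not replace, the cryptographically signed updates mentioned earlier.

```python
import hashlib
import os
import tempfile


def sha256_of_file(path: str, chunk_size: int = 65536) -> str:
    """Hash a file in chunks so large model files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_frozen_model(path: str, certified_digest: str) -> bool:
    """True only if the file on disk is byte-for-byte the certified artefact."""
    return sha256_of_file(path) == certified_digest


with tempfile.TemporaryDirectory() as tmp:
    # Placeholder bytes stand in for a real .tflite or .onnx file.
    model_path = os.path.join(tmp, "lane_detector.tflite")  # hypothetical name
    with open(model_path, "wb") as f:
        f.write(b"placeholder bytes standing in for a frozen model")

    certified = sha256_of_file(model_path)  # recorded when this version was certified
    unchanged_ok = verify_frozen_model(model_path, certified)

    with open(model_path, "ab") as f:
        f.write(b"\x00")  # simulate a single appended byte
    modified_ok = verify_frozen_model(model_path, certified)

print(unchanged_ok, modified_ok)  # True False
```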

But even with freezing, guardrails are still needed. A frozen AI might still make a wild guess if it sees something totally new, like a self-driving car encountering a chair in the middle of the highway. That’s why systems pair frozen models with rule-based fallbacks (e.g., “if the AI’s confidence drops below 95%, then slam the brakes”) and runtime monitors that cross-check outputs against physics or common sense.
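The guardrail pattern can be sketched as a thin layer of plain, reviewable rules around the model's output. In this minimal Python illustration, the 95% threshold comes from the example above. The `Perception` fields, the plausibility bound and the choice of emergency braking as the fallback are assumptions for illustration only. A real system's safe action would come from its own hazard analysis.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    FOLLOW_AI = "follow_ai"
    EMERGENCY_BRAKE = "emergency_brake"


@dataclass(frozen=True)
class Perception:
    label: str
    confidence: float           # model's confidence, 0.0 to 1.0
    obstacle_distance_m: float  # model's estimated distance to the nearest obstacle


CONFIDENCE_THRESHOLD = 0.95     # the 95% rule from the text
MAX_PLAUSIBLE_SPEED_MPS = 70.0  # hypothetical bound on how fast a distance can change


def guardrail(current: Perception, previous: Perception, dt_s: float) -> Action:
    """Rule-based fallback wrapped around a frozen model's output."""
    if dt_s <= 0:
        raise ValueError("dt_s must be positive")
    # Rule 1: low confidence means the input may be outside the model's experience.
    if current.confidence < CONFIDENCE_THRESHOLD:
        return Action.EMERGENCY_BRAKE
    # Rule 2: physics cross-check; reject distance estimates that change implausibly fast.
    implied_speed = abs(current.obstacle_distance_m - previous.obstacle_distance_m) / dt_s
    if implied_speed > MAX_PLAUSIBLE_SPEED_MPS:
        return Action.EMERGENCY_BRAKE
    return Action.FOLLOW_AI


prev = Perception("clear_road", 0.99, 80.0)
print(guardrail(Perception("clear_road", 0.98, 79.0), prev, 0.05))  # Action.FOLLOW_AI
print(guardrail(Perception("unknown", 0.60, 79.0), prev, 0.05))     # Action.EMERGENCY_BRAKE
print(guardrail(Perception("clear_road", 0.99, 20.0), prev, 0.05))  # Action.EMERGENCY_BRAKE
```

Keeping these rules outside the model means they can be reviewed, unit tested and covered with conventional techniques such as MC/DC, even though the model itself cannot.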

Traditional verification in AI-enabled embedded systems

Embedded safety-critical systems that incorporate AI components must still adhere to foundational verification and validation practices, including static analysis, unit testing, code coverage and traceability. These practices remain critical to ensuring system integrity, regulatory compliance and safety, though their implementation may require adaptation to address the unique challenges posed by AI.

- Static analysis retains its importance even in AI-driven systems. Traditional code, such as control logic, sensor interfaces and safety monitors, can be rigorously analysed using established tools to detect vulnerabilities or coding standard violations. AI components (e.g., neural networks), however, introduce new complexities. Emerging tools are beginning to address AI-specific risks, such as unsafe operators in frameworks like TensorFlow or PyTorch, but the field remains in its infancy. Static analysis must therefore evolve to encompass both conventional code and AI framework configurations, ensuring compliance with coding standards such as MISRA C/C++ or CERT C/C++.

- Unit testing continues to validate low-level functionality, particularly for non-AI components such as communication protocols, fault handlers and hardware interfaces. However, AI models, which derive behaviour from data rather than explicit code, demand a shift toward data-driven validation. This includes testing for edge cases, adversarial inputs and scenario coverage. While the AI model itself may not be amenable to traditional unit tests, the surrounding code, such as data preprocessing pipelines, inference engines and output handlers, must still undergo rigorous unit testing to isolate and mitigate defects.

- Code coverage metrics, such as modified condition/decision coverage (MC/DC), remain central to certification. DO-178C requires MC/DC for the most critical (Level A) airborne software, and ISO 26262 highly recommends it for ASIL D automotive software. These metrics ensure thorough testing of decision logic in conventional code. For AI components, traditional code coverage is not directly applicable, but analogous concepts are emerging. Techniques like input space coverage and neuron activation coverage aim to quantify the sufficiency of testing for neural networks, ensuring that diverse scenarios and model behaviours are exercised during validation.

- Requirements traceability is non-negotiable in safety-critical systems, as it provides an auditable chain from system requirements to implementation and testing. AI complicates this process due to its data-driven, often opaque decision-making. To maintain traceability, practices such as explainable AI (XAI), model introspection, and thorough documentation of training data, model architecture and validation results are essential. For example, a requirement such as “the system shall detect obstacles in low-light conditions” must map not only to sensor code but also to the AI model’s training data diversity and robustness testing.

- Regulatory frameworks (e.g., IEC 62304 for medical devices) implicitly mandate these practices, regardless of AI involvement. Hybrid approaches that combine classical verification with AI-specific methods, such as adversarial testing, runtime monitoring and bias detection, are necessary to address both traditional software risks (e.g., memory leaks) and AI-specific failure modes (e.g., dataset bias). Furthermore, system-level safety depends on the seamless integration of AI and non-AI components; flaws in either can cascade into catastrophic failures.
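Neuron activation coverage can be computed in a few lines once a layer's activations have been recorded for each test input. The sketch below assumes those activations are already available as plain lists; extracting them from a real framework is not shown. It uses one simple formulation: a neuron counts as covered if any test input drives it above a threshold. The function name and the recorded values are hypothetical.

```python
from typing import Sequence


def neuron_activation_coverage(
    activations: Sequence[Sequence[float]], threshold: float = 0.0
) -> float:
    """Fraction of neurons driven above `threshold` by at least one test input.

    activations[i][j] is the output of neuron j for test input i.
    """
    if not activations or not activations[0]:
        return 0.0
    n_neurons = len(activations[0])
    covered = [False] * n_neurons
    for row in activations:
        if len(row) != n_neurons:
            raise ValueError("every test input must report the same number of neurons")
        for j, value in enumerate(row):
            if value > threshold:
                covered[j] = True
    return sum(covered) / n_neurons


# Hypothetical recorded activations: 3 test inputs x 4 neurons in one layer.
recorded = [
    [0.0, 0.7, 0.0, 0.0],
    [0.2, 0.0, 0.0, 0.0],
    [0.0, 0.9, 0.0, 0.0],
]
print(neuron_activation_coverage(recorded))  # 0.5
```

Here neurons 2 and 3 never activate, which hints that the test set may not exercise some of the model's behaviours. As with MC/DC for conventional code, a high score is evidence of test breadth, not proof of correctness.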

While AI introduces novel challenges, traditional verification practices remain indispensable. The solution lies in augmenting these practices with AI-aware techniques, ensuring comprehensive coverage of both code and model risks. By maintaining rigorous static analysis, testing, coverage and traceability whilst adapting tools and methods to address AI’s unique demands, developers can achieve the robustness required for safety-critical certification and operational deployment.

By Ricardo Camacho, Director of Safety & Security Compliance, Parasoft