Edge AI only becomes meaningful when it leaves the lab and operates reliably in the field.

In real deployments, system architects quickly face constraints that go far beyond raw computing performance. Latency must be predictable, power budgets are limited, connectivity is not always guaranteed, and systems are expected to run continuously for years in harsh environments.

At Aetina, Edge AI is approached as a system-level challenge rather than a standalone computing problem. By combining NVIDIA® Jetson™ platforms with industrial-grade hardware design, optimized software stacks, and deployment experience, Aetina delivers edge AI devices that are already operating in traffic infrastructure, smart agriculture, industrial inspection, and intelligent buildings. With the upcoming launch of NVIDIA® Jetson Thor™, this approach naturally extends toward more advanced and autonomous edge systems.

From NVIDIA Jetson Modules to Aetina Edge AI Devices

The NVIDIA Jetson platform provides the accelerated computing foundation for edge AI, but turning a Jetson module into a deployable product requires additional engineering. Power stability, thermal behavior, enclosure design, I/O flexibility, and long-term availability all influence whether a system can survive outside controlled environments.

Aetina’s DeviceEdge portfolio addresses these requirements by offering complete systems built on NVIDIA Jetson Orin™ platforms and prepared for future Jetson Thor designs. These systems are validated for continuous operation, wide temperature ranges, and integration into existing infrastructure, allowing customers to focus on AI application development rather than hardware constraints.

Understanding Performance at the Edge: What TOPS Really Means

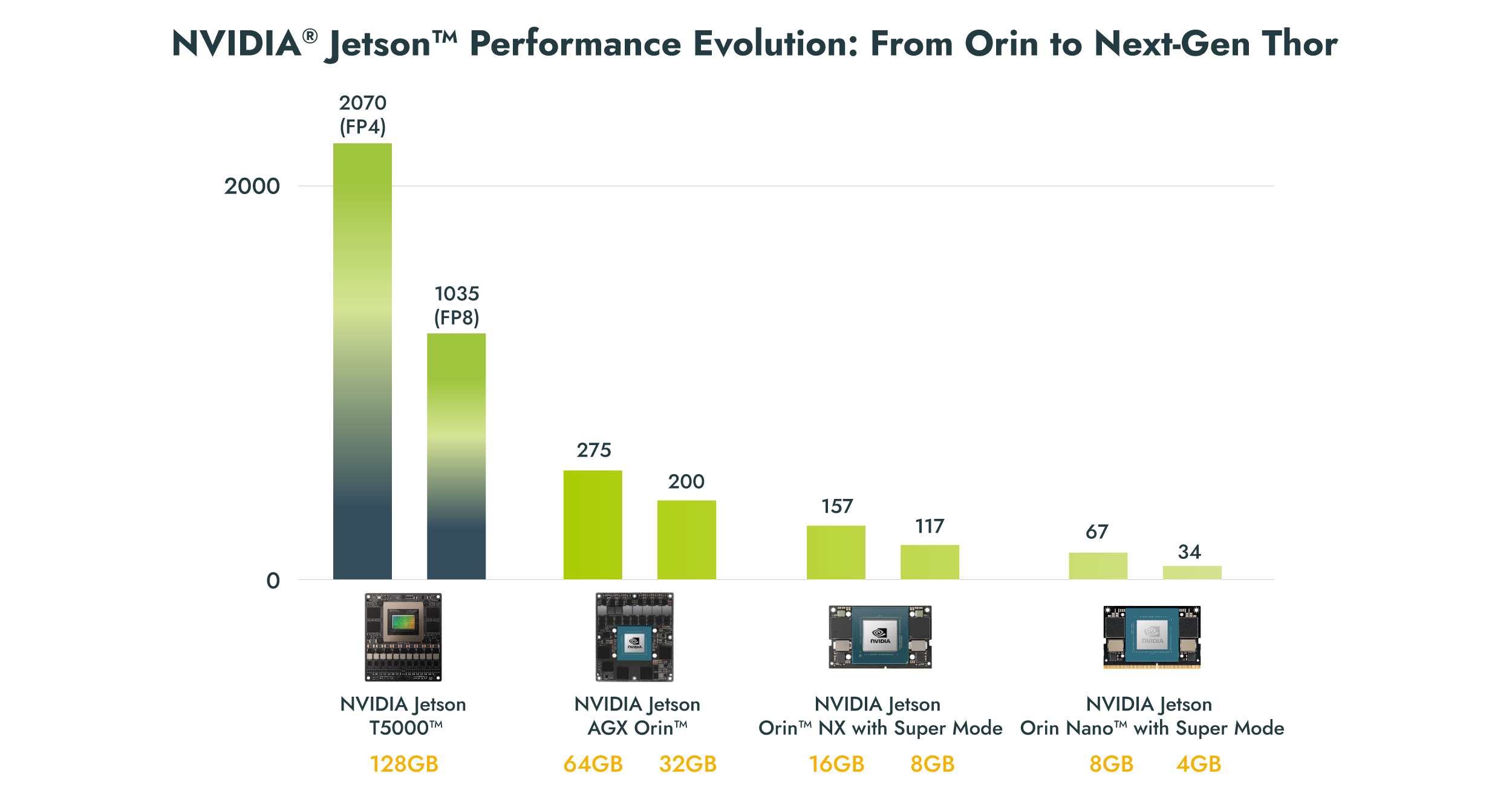

When comparing edge AI platforms, performance is often expressed in TOPS, or trillions of operations per second. TOPS indicates the peak number of AI operations a processor can execute per second, and it directly affects how many models, camera streams, or AI tasks can run simultaneously.

In real deployments, higher TOPS enables more complex models, higher input resolution, multi-stream processing, and lower latency. However, TOPS alone does not guarantee system performance. Efficient use of frameworks such as NVIDIA CUDA® and TensorRT™ is essential to translate theoretical performance into real-world results.
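As a rough illustration of how a TOPS rating relates to stream count, the sketch below divides a usable compute budget by the per-stream cost of a model. The function name `max_streams`, the model cost of 100 giga-operations per frame, and the 30% sustained-utilization factor are assumptions chosen for illustration, not measured values. Real capacity also depends on numeric precision, memory bandwidth, and how well the model is optimized.

```python
def max_streams(peak_tops: float, model_gops_per_frame: float, fps: float,
                utilization: float = 0.3) -> int:
    """Estimate how many camera streams fit within a peak TOPS rating.

    utilization is the assumed fraction of peak throughput that the
    workload sustains in practice (hypothetical default of 30%).
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    if model_gops_per_frame <= 0 or fps <= 0:
        raise ValueError("model cost and fps must be positive")
    usable_ops_per_second = peak_tops * 1e12 * utilization
    ops_per_stream_per_second = model_gops_per_frame * 1e9 * fps
    return int(usable_ops_per_second // ops_per_stream_per_second)


# Peak ratings quoted in this article; the model cost is hypothetical.
for name, tops in [("Jetson AGX Orin", 275), ("Jetson Orin Nano", 67)]:
    print(name, max_streams(tops, model_gops_per_frame=100, fps=30))
```

Under these assumptions the estimate is 27 streams for the 275 TOPS rating and 6 for the 67 TOPS rating. The utilization factor is the part most worth measuring on the target system, since it captures the gap between theoretical and achieved throughput.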

High-Performance Traffic Monitoring with NVIDIA Jetson AGX Orin™

Traffic monitoring and enforcement remain among the most demanding AI use cases. Systems must process multiple high-resolution video streams in real time, often under extreme weather conditions, while maintaining high accuracy and uptime.

Aetina’s AIE-PX11, AIE-PX12, AIE-PX21, and AIE-PX22, powered by NVIDIA Jetson AGX Orin, are widely deployed in intelligent traffic projects across Europe. Depending on configuration, Jetson AGX Orin delivers up to 275 TOPS, making it suitable for heavy vision workloads such as license plate recognition, vehicle classification, speed monitoring, and incident detection.

These systems leverage NVIDIA CUDA for parallel processing, TensorRT for low-latency inference, and DeepStream to manage multiple video pipelines efficiently. By running inference locally, the systems avoid cloud dependency, reduce latency, and improve data privacy. In practice, this results in scalable and cost-effective traffic solutions that improve road safety and operational efficiency.

Smart Agriculture with NVIDIA Jetson Orin NX: Performance in a Compact Footprint

Agricultural AI systems often operate in remote locations with limited connectivity. Decisions such as disease detection and crop treatment must be taken directly in the field, making edge inference essential.

Aetina’s AIE-KN32-S2 and AIE-KN42-S2 systems, based on NVIDIA Jetson Orin™ NX, are designed for these scenarios. Jetson Orin NX delivers up to 157 TOPS on the newer modules when Super Mode is enabled, raising performance while keeping the same compact footprint.

In AI-powered plant health monitoring deployments, cameras capture detailed images of crops, and deep learning models analyze leaf texture, color, and patterns to detect diseases early. Models trained using PyTorch are optimized with TensorRT before deployment, enabling real-time inference on site. This approach eliminates the need for laboratory testing and allows faster intervention, improving yield and reducing waste.
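One common route from PyTorch to TensorRT is to export the trained model to ONNX (for example with `torch.onnx.export`) and then build an engine with the `trtexec` tool that ships with TensorRT. The sketch below only assembles that `trtexec` command line. It assumes the ONNX export has already been done and that `trtexec` is on the PATH of the target device. The file names and the helper `trtexec_command` are hypothetical, and this is not necessarily the workflow used in the deployments described above.

```python
import shlex


def trtexec_command(onnx_path: str, engine_path: str, fp16: bool = True) -> list[str]:
    """Build the argument list for a trtexec engine build.

    Uses the trtexec options --onnx, --saveEngine, and --fp16.
    """
    cmd = ["trtexec", f"--onnx={onnx_path}", f"--saveEngine={engine_path}"]
    if fp16:
        # Allow reduced-precision FP16 kernels where TensorRT finds them faster.
        cmd.append("--fp16")
    return cmd


# Hypothetical file names for a plant-health classifier.
cmd = trtexec_command("plant_health.onnx", "plant_health.engine")
print(shlex.join(cmd))
```

The resulting list can be passed to `subprocess.run(cmd, check=True)` on the Jetson device itself. Engines are generally built on the same module type they will run on, because TensorRT tunes them for the target GPU.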

Compact Intelligence with NVIDIA Jetson Orin Nano and Super Mode

Not every edge AI application requires high compute density. In smart buildings, access control, and localized analytics, system size, power consumption, and cost are often the primary constraints.

Aetina’s AIB-SO31, powered by NVIDIA Jetson Orin Nano™, targets these applications. Jetson Orin Nano provides up to 67 TOPS, making it suitable for entry-level vision tasks such as people counting, basic object detection, and flow analysis.

With the introduction of NVIDIA JetPack™ 6.2, Jetson Orin Nano can operate with Super Mode, unlocking additional performance without hardware changes. This allows developers to deploy slightly more demanding models or increase input resolution while maintaining low power consumption. The same CUDA and TensorRT stack used on higher-end systems ensures software consistency across platforms.

Industrial Inspection and Machine Vision

In industrial inspection, AI systems must handle real-time data processing while maintaining robustness against vibration, dust, and temperature variations. Latency directly impacts production throughput and quality control.

Aetina deploys Jetson AGX Orin-based systems for advanced visual and ultrasonic inspection, where AI models analyze sensor data continuously to detect defects and anomalies. CUDA accelerates data processing pipelines, while TensorRT keeps inference latency low and consistent. Processing data locally improves responsiveness and reduces dependency on centralized infrastructure, which is often impractical in industrial environments.
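To make the link between latency and throughput concrete, the sketch below derives a per-part time budget from a line rate and checks a measured worst-case latency against it. The function names, the 20% headroom, and the example line rate of 600 parts per minute are illustrative assumptions. The model also assumes parts are processed one at a time, with no pipelining or batching.

```python
def latency_budget_ms(parts_per_minute: float, headroom: float = 0.2) -> float:
    """Time available per part in milliseconds, keeping `headroom` in reserve."""
    if parts_per_minute <= 0:
        raise ValueError("parts_per_minute must be positive")
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in [0, 1)")
    interval_ms = 60_000.0 / parts_per_minute  # time between consecutive parts
    return interval_ms * (1.0 - headroom)


def keeps_up(worst_case_latency_ms: float, parts_per_minute: float,
             headroom: float = 0.2) -> bool:
    """True if capture, inference, and decision fit within the per-part budget."""
    return worst_case_latency_ms <= latency_budget_ms(parts_per_minute, headroom)


# Hypothetical line: 600 parts per minute leaves 100 ms per part, 80 ms after headroom.
print(latency_budget_ms(600))
print(keeps_up(65.0, 600), keeps_up(95.0, 600))
```

Checking worst-case rather than average latency matters here, because a single slow inference can force the line to slow down or let a part pass uninspected.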

Preparing for the Next Step with Jetson Thor

While most current deployments rely on Jetson Orin Nano, Orin NX, and AGX Orin, Aetina is actively preparing for the next generation of edge AI systems with the NVIDIA Jetson Thor-based AIB-AT78/AT68 models, scheduled to launch in March.

NVIDIA Jetson Thor is designed for applications that exceed today’s edge AI requirements, including autonomous machines, advanced robotics, and large-scale sensor fusion systems. Its AI performance reaches the 2,070 FP4 TFLOPS class, a metric aligned with modern transformer-based AI models and generative workloads. Together with higher memory bandwidth and high-speed networking support, this enables systems that combine perception, planning, and control in real time.

For Aetina, NVIDIA Jetson Thor represents a natural extension of the DeviceEdge portfolio. Experience gained from deploying NVIDIA Jetson Orin-powered systems directly translates into Jetson Thor-powered designs, providing customers with a scalable path toward higher autonomy.

Software Continuity Across the Portfolio

Across all Aetina edge AI devices, software continuity is a key advantage. CUDA, TensorRT, DeepStream, and mainstream frameworks such as PyTorch and TensorFlow form a consistent stack from NVIDIA Jetson Orin Nano to Jetson AGX Orin and Jetson Thor.

This allows developers to prototype on entry-level systems and scale to higher-performance platforms with minimal rework. Combined with Aetina’s industrial-grade hardware design and long-term support, this approach reduces deployment risk and accelerates time to market.

Practical Platform Selection Guide

| Application Type | Suggested NVIDIA Jetson Module | Consideration |
| --- | --- | --- |
| Basic Vision Tasks | Jetson Orin Nano | Entry-level AI, compact and power efficient |
| Compact Mobility (AGV, Unmanned devices) | Jetson Orin NX 8GB / 16GB | Better performance with small footprint |
| Heavy Vision AI Workloads | Jetson AGX Orin 32GB / 64GB | Best-in-class performance, advanced detection and segmentation |
| Smart Farming / Food Sorting | Jetson AGX Orin 32GB / 64GB | High reliability, multiple input stream support |
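For teams that want to encode this guide in tooling, for example in a configuration or quoting script, the table can be expressed as a simple lookup. The sketch below copies the rows above verbatim. The names `PLATFORM_GUIDE` and `suggest_module` are hypothetical, and the mapping is a starting point rather than a sizing tool.

```python
# Rows taken from the selection guide: application type -> (module, consideration).
PLATFORM_GUIDE = {
    "basic vision tasks": (
        "Jetson Orin Nano",
        "Entry-level AI, compact and power efficient",
    ),
    "compact mobility (agv, unmanned devices)": (
        "Jetson Orin NX 8GB / 16GB",
        "Better performance with small footprint",
    ),
    "heavy vision ai workloads": (
        "Jetson AGX Orin 32GB / 64GB",
        "Best-in-class performance, advanced detection and segmentation",
    ),
    "smart farming / food sorting": (
        "Jetson AGX Orin 32GB / 64GB",
        "High reliability, multiple input stream support",
    ),
}


def suggest_module(application_type: str) -> str:
    """Return the suggested Jetson module for an application type in the guide."""
    key = application_type.strip().lower()
    if key not in PLATFORM_GUIDE:
        known = ", ".join(sorted(PLATFORM_GUIDE))
        raise KeyError(f"unknown application type {application_type!r}; known: {known}")
    module, _consideration = PLATFORM_GUIDE[key]
    return module


print(suggest_module("Heavy Vision AI Workloads"))
```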

Deployment Tips

- For deep learning training and model development, use NVIDIA Jetson AGX Orin with full JetPack and CUDA support

- For low latency and real-time inference, always optimize models using TensorRT

- For low power and cost-sensitive deployments, Jetson Orin Nano is a strong entry point, especially with Super Mode enabled via JetPack 6.2

Turning Platforms into Real Solutions

The value of Edge AI is measured in deployment success, not benchmark numbers. By focusing on complete systems rather than standalone modules, Aetina bridges the gap between NVIDIA Jetson platforms and real-world applications.

From traffic infrastructure and agriculture to smart buildings and industrial inspection, Aetina’s edge AI devices demonstrate how Jetson technology can be transformed into reliable, scalable solutions. With Jetson Thor on the horizon, this approach is set to support the next wave of autonomous and intelligent systems, where edge AI moves from supporting decisions to making them.

By FELIPE LEIVA, Technical Project Manager, Aetina